Legal AI accuracy is transforming contract review: AI boosts speed and consistency, while human insight ensures context and strategic judgment.

Your legal team spent 2,000 hours last year reviewing contracts. A new AI tool promises to cut that to 200 hours. The pitch sounds incredible, but it hinges on a question that keeps general counsels up at night: can you actually trust legal AI accuracy compared to human review?

Here's the reality: accuracy is the biggest barrier to adopting AI in legal work. And it's complicated.

The Accuracy Debate: What 2025 Data Really Shows

The short answer? It depends. AI is extremely good at some things and worse at others.

Consider this: one industry benchmark on NDA review found an AI tool achieved 94% accuracy while a team of lawyers managed only 85%. Even more striking, that AI scanned five sample NDAs in 26 seconds while the lawyers needed 92 minutes. This illustrates how AI can dramatically outperform humans in speed on structured, repetitive tasks.

Generic chatbots, by contrast, fail on 50–80% of tough legal queries.

Here's the key insight: accuracy isn't one fixed number. AI can outperform humans on specific, well-defined tasks where human accuracy tends to level off. But its error rate rises on open-ended analysis. The real question is accuracy at what cost, on which task, with what oversight.

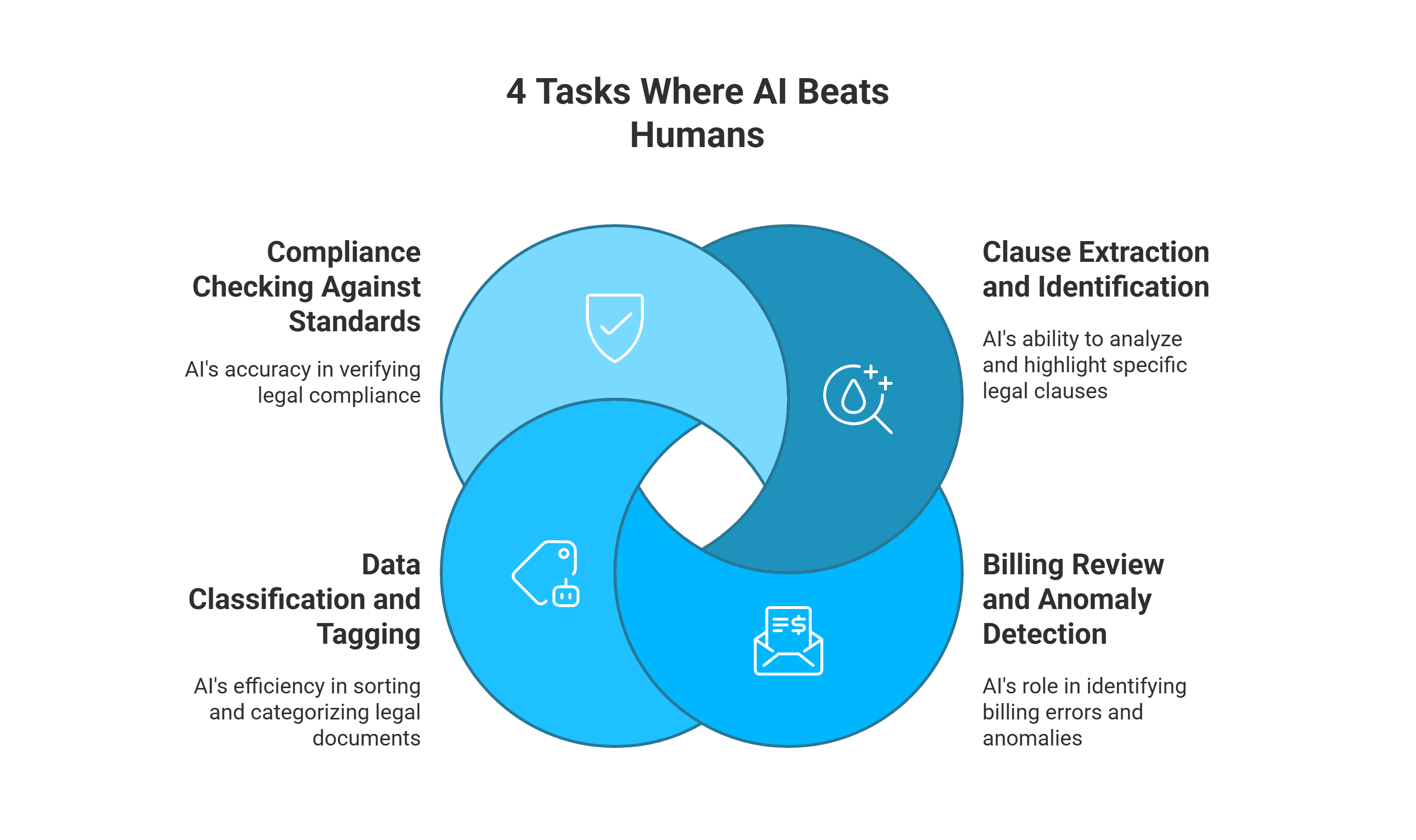

Where AI Dominates: 4 Tasks Where AI Beats Humans

Clause Extraction and Identification

AI excels at pulling out specific clauses in contracts. Modern models accurately spot indemnities, liability caps, or confidentiality clauses, while lawyers tend to miss more of them during long review sessions. An AI tool consistently identifies indemnity provisions across hundreds of contracts, whereas human reviewers start overlooking details as fatigue sets in.

Billing Review and Anomaly Detection

The AI instantly checks every line item, total, and rate. It flags overcharges, duplicate time entries, or out-of-policy billing even at 3 a.m. when people are tired. It will catch a miscalculated total or a strange rate hike that a human might overlook after hours of review.

The advantage here is straightforward: an AI doesn't fatigue or lose focus, so the math is always perfect and no anomaly is missed due to human exhaustion.

Data Classification and Tagging

Document classification is another win for AI. These systems can label contracts by category, jurisdiction, involved parties, and more, eliminating the inconsistencies that arise when multiple human reviewers handle the same task. AI completes this tagging in seconds across hundreds of documents, while a person typically takes several minutes per file.

Compliance Checking Against Standards

Verifying checklists is effortless for AI. It can instantly confirm that all mandatory clauses, like confidentiality, arbitration, and signature blocks, are present and correctly formatted. In contrast, even experienced lawyers tend to slow down and miss details after hours of review.

Examples include ensuring a contract has the required consumer-protection clause, that all dates and version numbers are filled, or that regulatory disclosures match company policy. The advantage: exhaustive accuracy. AI flags any missing or outdated element that a distracted human might miss.

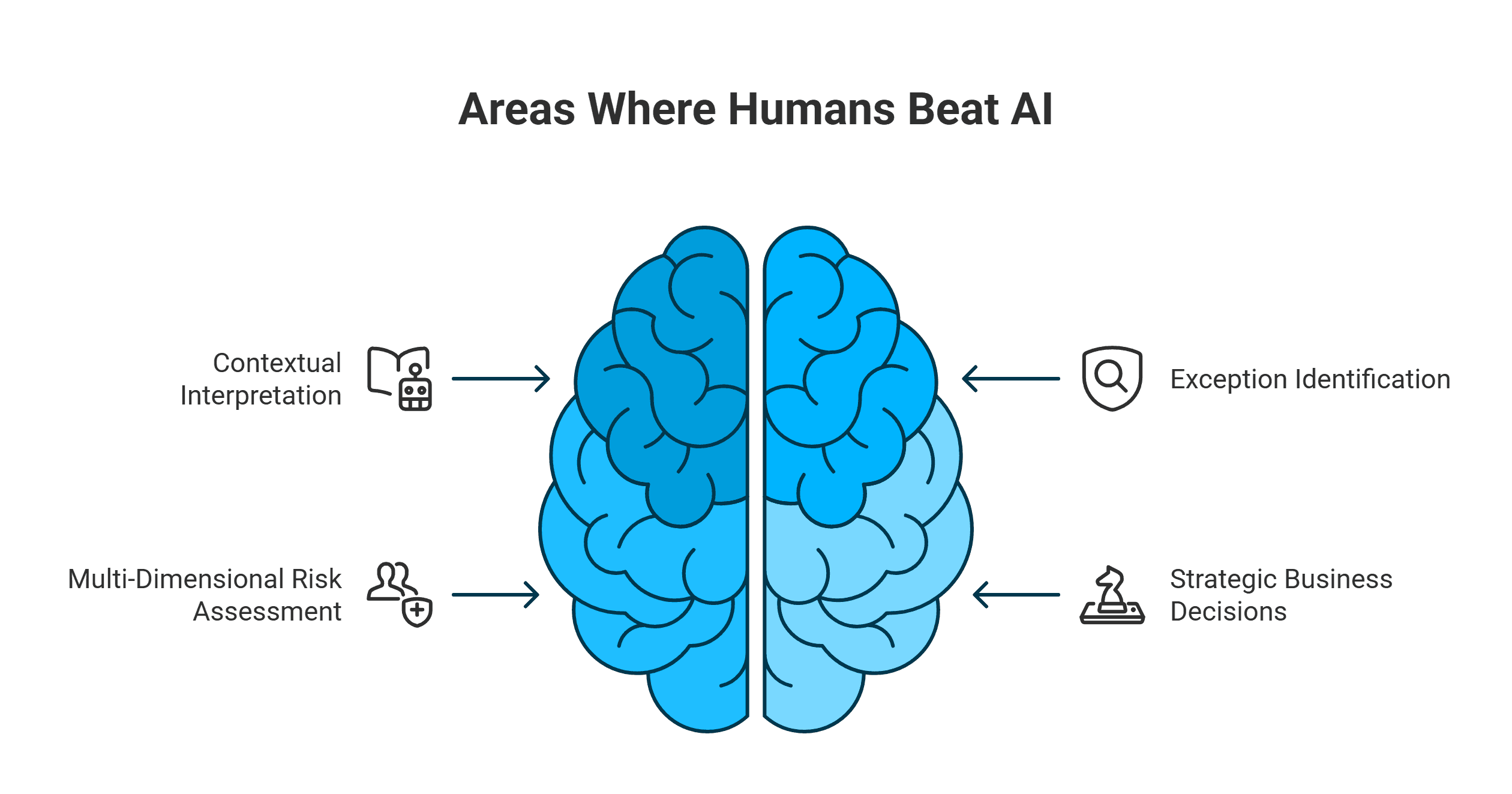

Where Humans Still Lead: 4 Judgment Areas

Contextual Interpretation and Nuance

Is this clause reasonable for this client and this deal? AI, lacking real-world knowledge and judgment, can't ask or answer that question. A clause that looks strict might be customary in one sector but excessive in another. A lawyer knows the client's risk tolerance, industry norms, and deal strategy. AI does not. A mistake here is strategic, not mechanical.

Exception Identification and Novel Anomalies

When something falls outside the usual pattern, humans have the edge. Lawyers can instinctively sense that "something feels off" even if they can't immediately articulate why. They excel at spotting truly novel issues: an unusual party structure, an atypical risk allocation, or a hidden conflict. These one-off problems often carry big risk.

An AI model trained on existing data might simply ignore or mislabel a novel clause it has never seen before. The risk is critical: one overlooked exception can be devastating.

Multi-Dimensional Risk Assessment

Legal risk isn't one-dimensional, and humans are better at integrating multiple factors. A lawyer weighs many competing considerations simultaneously: how large is this contract, how dangerous is the potential liability, what is our relationship with the counterparty, and what is our appetite for risk?

For example, deciding if a 10 million dollar liability cap is adequate depends on the industry, the client's insurance coverage, and the value of the deal. AI can flag the clause number but it cannot weigh those interconnected business factors. The risk is high: weighing risks in isolation can be misleading.

Strategic Business Decisions

Some tasks require outright negotiation and strategy. Should we push back on this strict indemnity clause or accept it to close the deal quickly? Is it better to waive a minor compliance issue to keep a key customer happy? These answers depend on high-level goals such as preserving a relationship, meeting a deadline, or winning a big client.

AI might highlight a clause but it can't decide trade-offs for your business. The risk is critical: the wrong strategy can cost millions.

The Hallucination Risk: The Uncomfortable Truth

No matter how smart the AI, it can and will confidently invent things. This is fundamentally different from human errors: people might miss a clause, but they don't fabricate a case or create a ghost contract. AI models can and do generate plausible-sounding but false information. They might cite a non-existent case or say "Section 5.2 says X" when no such section exists.

Real examples:

2023, New York: two attorneys sanctioned for filing briefs with six AI-fabricated court cases.

2024, Canada: Air Canada's chatbot wrongly told a customer he could claim a bereavement fare retroactively. The tribunal ordered the airline to compensate him.

These “hallucinations” can trigger penalties, reputational damage, or worse.

Summary on Judgment Work

In judgment-heavy, context-dependent tasks, humans still hold clear superiority. Yes, lawyers fatigue too, but their errors come from oversight, not fabrication. Until AI truly understands business reality and only outputs verified information, we rely on human intelligence for nuanced decisions.

The Economics: Why Speed and Accuracy Determine the Real Winner

What Human Review Actually Costs

Experienced lawyers' rates often run 100 to 200 dollars per hour or more (associates 75 to 120 dollars per hour; partners 200 to 300 dollars per hour). A typical contract takes 1 to 2 hours to review, which puts the cost at roughly 125 to 500 dollars per contract. Scale that to 1,000 contracts: at 100 dollars per hour for 2 hours each, that's 200,000 dollars. In practice, many companies spend 125,000 to 500,000 dollars or more annually on heavy contract review workloads. And that's just the base cost.

What AI Review Actually Costs

AI flips the math. A contract-processing AI tool might charge 0.50 to 2.00 dollars per document, often by page. An enterprise legal-AI subscription might be 10,000 to 50,000 dollars per year plus a 5,000 to 20,000 dollars setup fee. Even adding lawyer oversight of say 5 to 10 minutes per contract, the cost per contract is tiny.

For example, processing 1,000 contracts might incur 1,000 dollars in AI usage plus a 15,000 dollar license plus approximately 8,000 dollars in lawyer quality control, roughly 24,000 dollars total. In effect, AI-assisted review costs on the order of 24 dollars per contract, often 75 to 95% less than manual review.
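The arithmetic in this example is easy to reproduce and adapt to your own figures. The sketch below is illustrative only: the class and function names are hypothetical, the inputs are the example numbers from the paragraph above, and the manual baseline uses the 125 to 500 dollar per-contract range quoted earlier.

```python
from dataclasses import dataclass


@dataclass
class HybridReviewCost:
    """Cost inputs for an AI-plus-human review (names are illustrative assumptions)."""
    contracts: int
    ai_usage_fee: float   # total per-document usage charges
    license_fee: float    # annual subscription
    lawyer_qc: float      # lawyer quality-control time, in dollars

    def total(self) -> float:
        return self.ai_usage_fee + self.license_fee + self.lawyer_qc

    def per_contract(self) -> float:
        return self.total() / self.contracts


def savings_vs_manual(hybrid_per_contract: float, manual_per_contract: float) -> float:
    """Fraction saved relative to manual review (0.0 to 1.0)."""
    return 1 - hybrid_per_contract / manual_per_contract


# Figures taken from the example above.
example = HybridReviewCost(contracts=1_000, ai_usage_fee=1_000,
                           license_fee=15_000, lawyer_qc=8_000)

print(f"Total: {example.total():,.0f} dollars")
print(f"Per contract: {example.per_contract():.2f} dollars")
for manual in (125, 500):  # manual cost per contract, low and high end
    saved = savings_vs_manual(example.per_contract(), manual)
    print(f"Versus {manual} dollars manual: {saved:.0%} saved")
```

Swapping in your own volume and fees shows how quickly the fixed license cost is diluted as the number of contracts grows.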

Verdict: In hard dollars and hours, the AI-plus-human hybrid workflow wins. You typically recoup the AI investment in months. The key is proper implementation using enterprise-grade AI, encryption, and clear policies, always layering in human review on critical matters. With those safeguards, you get cost and time savings of 80 to 90% and most of the accuracy with only a sliver of additional risk.

Where AI's True Value Emerges: Large-Scale Analysis

The Fatigue Factor

Human accuracy drops steadily with fatigue. If a lawyer reviews contracts for 8 to 10 hours straight, error rates climb with each passing hour. They might catch approximately 95% of issues in hour one to two but only approximately 70 to 80% by hour seven to eight. By the 75th contract of the day, they could be 20 to 25% less accurate than at the start.

AI, in contrast, does not tire. It can process 1,000 contracts and maintain approximately 94% accuracy throughout. In large batches, humans cumulatively miss far more simply because their attention fades, whereas AI stays consistent.
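To see what this fatigue effect means over a full day, here is a rough sketch. It assumes a straight-line decline in the human catch rate from 95% in hour one to 75% in hour eight (the midpoint of the 70 to 80% range above), a flat 94% for the AI, and issues spread evenly across the day. The linear shape and the function name are assumptions for illustration, not measured behavior.

```python
AI_CATCH_RATE = 0.94          # flat rate quoted above
HOURS = range(1, 9)           # an 8-hour review day


def human_catch_rate(hour: int) -> float:
    """Assumed linear decline from 95% (hour 1) to 75% (hour 8)."""
    start, end = 0.95, 0.75
    return start + (end - start) * (hour - 1) / 7


# Average catch rate across the day, assuming equal work in every hour.
human_avg = sum(human_catch_rate(h) for h in HOURS) / len(HOURS)

issues = 1_000  # hypothetical number of issues in the day's batch
human_missed = round(issues * (1 - human_avg))
ai_missed = round(issues * (1 - AI_CATCH_RATE))

print(f"Human average catch rate: {human_avg:.0%}")
print(f"Missed out of {issues}: human {human_missed}, AI {ai_missed}")
```

Under these assumptions the day-long human average lands well below the hour-one figure, which is why batch size matters when comparing the two.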

Consistency: The Hidden Advantage

Different lawyers can interpret the same clause in multiple ways. One might call it a force majeure while another calls it an act of God, with varying risk ratings. AI, however, applies the same rule every time. Over thousands of documents, this uniformity pays off.

If an AI tags every nondisclosure agreement reliably, you avoid the situation where one reviewer calls something an NDA and another does not. Consistency at scale often matters more than nominal accuracy: it means the legal team makes decisions based on uniform data.

Pattern Recognition at Scale

AI finds patterns humans simply can't in bulk. Need to know which customers never accept your indemnity clause? Or how often you include a price escalation in big deals? AI can scan 10,000 contracts and spit out insights in minutes. Humans would spend months.

In fact, AI benchmarks show it often answers complex contract analysis questions in seconds that take lawyers 20 plus minutes, usually outperforming on tasks like summarizing precedent or linking issues across documents. These macro-level insights (for example "Our deals in industry X added this clause 90% of the time last year") are uniquely AI's strength and drive better strategy.

Speed at Scale

The time savings compound with volume. Imagine 60 lengthy RFP compliance checks, each 100 pages with 45 criteria. Manually that's approximately 150 hours (2.5 hours each). AI can do them in approximately 3 hours total (3 minutes each). You've freed approximately 147 hours, a 10 to 15 thousand dollar labor value, for more valuable work.

The more documents, the greater the advantage: AI adds only minutes per document while humans add hours. This means that at high volumes (500 plus documents) AI isn't just a little faster: in the RFP example it is roughly 50 times faster, and the hours saved keep growing with every document.
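The RFP numbers can be checked with a few lines of arithmetic. This sketch uses the per-document times from the example (2.5 hours manually, 3 minutes with AI); the function name and the larger volumes are hypothetical.

```python
def review_hours(documents: int, minutes_per_document: float) -> float:
    """Total review time in hours for a batch of documents."""
    return documents * minutes_per_document / 60


MANUAL_MINUTES = 150  # 2.5 hours per RFP, from the example
AI_MINUTES = 3        # 3 minutes per RFP, from the example

for docs in (60, 500, 5_000):  # 60 is the example; the others are hypothetical
    manual = review_hours(docs, MANUAL_MINUTES)
    ai = review_hours(docs, AI_MINUTES)
    print(f"{docs:>5} docs: manual {manual:,.0f} h, AI {ai:,.0f} h, "
          f"saved {manual - ai:,.0f} h, speed-up {manual / ai:.0f}x")
```

The speed-up ratio stays the same at every volume, while the hours freed grow in step with the document count.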

The Hybrid Model at Scale

The winning formula is AI plus human. Let AI handle bulk and humans handle nuance. For example, an AI can process 1,000 contracts in minutes (extracting clauses, flagging risks). Then a legal team might spend 10 to 15 hours total reviewing just those flags versus 1,200 hours if they read every contract from scratch.

In one scenario, full manual review of 1,000 contracts cost 125,000 dollars (1,200 hours). AI-assisted review (AI fees plus 15 hours attorney time) cost about 22,000 dollars (150 hours). That's an 82% cost cut, and an even larger cut in hours, with no loss in real accuracy. In practice, AI turbocharges output and consistency while lawyers apply final judgment. For high volumes, this hybrid workflow wins every time.

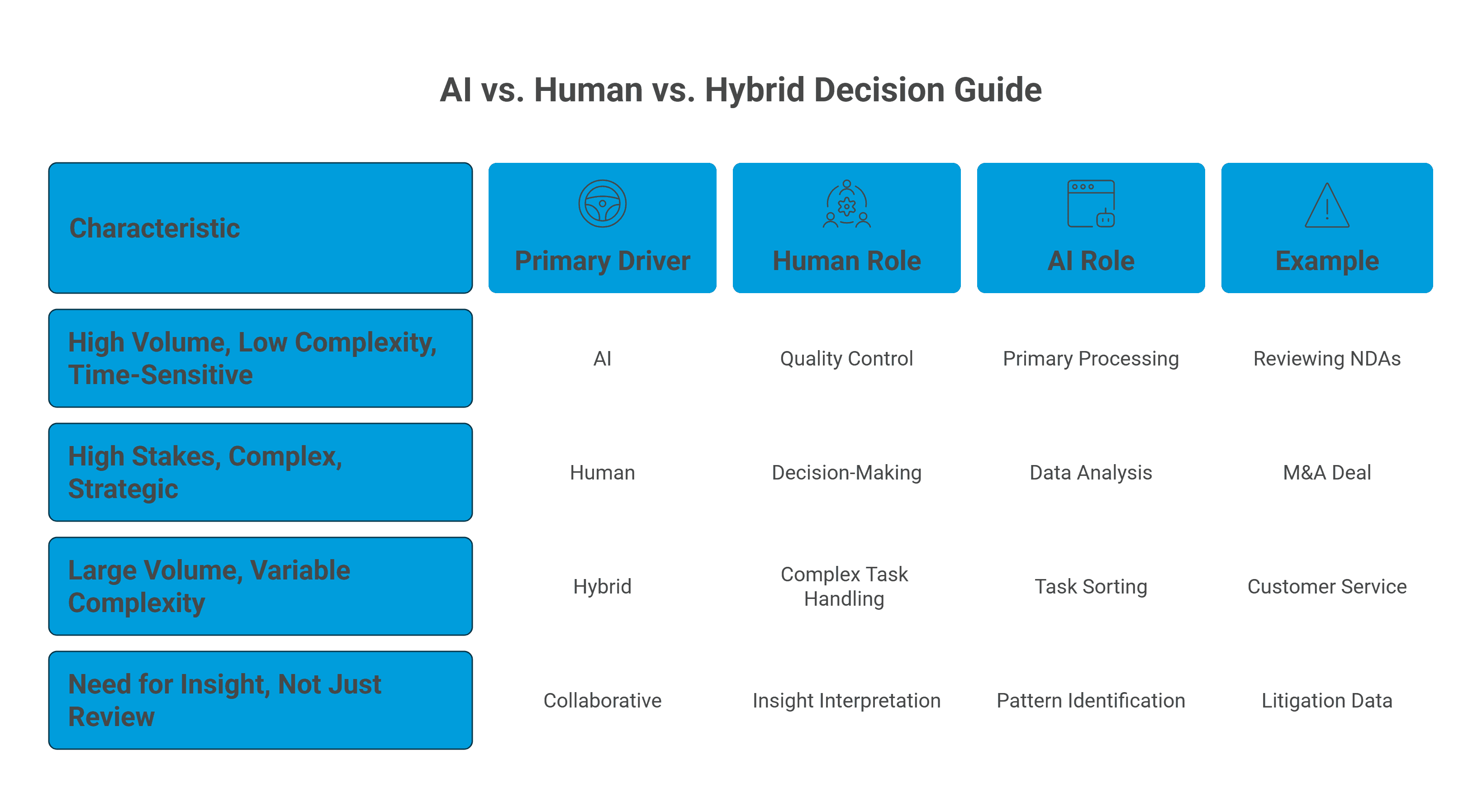

When to Use AI vs. Human vs. Hybrid

A common challenge in legal AI adoption is deciding who or what should handle each task. Here’s a simple way to think through the options and make smarter choices:

High Volume, Low Complexity, Time-Sensitive

Examples: NDAs, billing reviews, RFP compliance checks.

Tip: Use AI as the primary reviewer with light human quality checks. Expect exceptionally high accuracy and significant savings in both time and cost. In bulk tasks, speed and consistency matter more than nuance, and minor errors can be fixed later.

High Stakes, Complex, Strategic

Examples: M&A due diligence, JV contracts, partnership deals.

Tip: Choose a human-led review supported by AI research tools. Senior counsel should lead for near-perfect accuracy, with AI accelerating background analysis. When the stakes are high, judgment and context outweigh speed.

Large Volume, Variable Complexity

Examples: A mixed vendor contract portfolio (routine renewals + custom terms).

Tip: Use a hybrid triage model—AI clears the routine 80%, while lawyers handle the complex 20%. This approach combines AI’s efficiency with human discernment.

Need for Insight, Not Just Review

Examples: Contract analytics, compliance trends, vendor performance.

Tip: Deploy AI for pattern recognition and humans for interpretation. AI spots risks and trends across portfolios; lawyers translate them into strategic action.
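The four scenarios above can be read as a simple routing rule. The sketch below is one possible encoding, not a prescribed policy: the field names, the order in which the checks run, and the conservative default to human-led review are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Workflow(Enum):
    AI_PRIMARY = "AI primary reviewer with light human quality checks"
    HUMAN_LED = "Human-led review supported by AI research tools"
    HYBRID_TRIAGE = "Hybrid triage: AI clears routine work, lawyers take the complex items"
    AI_INSIGHT = "AI pattern recognition with human interpretation"


@dataclass(frozen=True)
class Task:
    """Hypothetical task profile; every flag is a judgment call by the legal team."""
    high_volume: bool
    high_stakes: bool
    variable_complexity: bool
    wants_insight: bool = False


def choose_workflow(task: Task) -> Workflow:
    # Assumed precedence: stakes first, then analytics, then volume.
    if task.high_stakes:
        return Workflow.HUMAN_LED
    if task.wants_insight:
        return Workflow.AI_INSIGHT
    if task.high_volume and task.variable_complexity:
        return Workflow.HYBRID_TRIAGE
    if task.high_volume:
        return Workflow.AI_PRIMARY
    return Workflow.HUMAN_LED  # conservative default (assumption)


nda_batch = Task(high_volume=True, high_stakes=False, variable_complexity=False)
ma_diligence = Task(high_volume=False, high_stakes=True, variable_complexity=True)
vendor_mix = Task(high_volume=True, high_stakes=False, variable_complexity=True)

for name, task in [("NDAs", nda_batch), ("M&A", ma_diligence), ("Vendor mix", vendor_mix)]:
    print(f"{name}: {choose_workflow(task).value}")
```

Checking stakes first means a high-volume but high-risk batch still goes to senior counsel, which matches the article's emphasis on judgment over speed when the stakes are high.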

From Theory to Practice: What Actually Matters

Data Privacy and Confidentiality

With legal documents, strict controls are mandatory. Ask any AI vendor: Is all data encrypted in transit and at rest? Can I deploy on-premises or on a private cloud? Are you prohibited from using our data to train your general model? What's your data retention policy and who can access it?

Ideally, use enterprise-grade legal AI with explicit privacy guarantees. Avoid public ChatGPT or any platform that might leak client data. Only trust solutions that meet your firm's security standards and preserve attorney-client privilege.

Hallucination Risk and Verification

Plan for the AI's tendency to make things up. Mitigation should include retrieval-augmented models (so it can only answer from your actual documents or approved sources) and required sourcing of answers (so you can fact-check). Establish a workflow where lawyers spot-check a sample of AI outputs before relying on them.

For example, review 10 to 20% of AI-generated clauses or answers against the source document. That small overhead (a few minutes per check) catches approximately 95% of AI mistakes. In practice, treat AI output as a draft or suggestion: always have a human lawyer confirm the key points before relying on them.
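One way to make the spot-check systematic is to draw the sample at random and turn the errors found into an estimated error rate for the whole batch. The sketch below is a minimal illustration: the function names are hypothetical, the 10% fraction is the low end of the range above, and the Wilson score interval is a standard statistical choice rather than anything this article prescribes.

```python
import math
import random


def pick_spot_check(output_ids: list[int], fraction: float, seed: int = 0) -> list[int]:
    """Randomly choose a fraction of AI outputs for lawyer review."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    k = max(1, round(len(output_ids) * fraction))
    return sorted(rng.sample(output_ids, k))


def wilson_interval(errors: int, checked: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% interval for the true error rate, given a sample."""
    p = errors / checked
    denom = 1 + z**2 / checked
    centre = (p + z**2 / (2 * checked)) / denom
    half = z * math.sqrt(p * (1 - p) / checked + z**2 / (4 * checked**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical batch: 1,000 AI outputs, 10% reviewed, 3 errors found.
sample = pick_spot_check(list(range(1_000)), fraction=0.10)
low, high = wilson_interval(errors=3, checked=len(sample))

print(f"Reviewed {len(sample)} outputs")
print(f"Estimated error rate between {low:.1%} and {high:.1%}")
```

If the upper end of that range is higher than your team can accept, that is a signal to review a larger share before relying on the batch.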

Change Management and Team Buy-In

Even the best AI fails if the team won't use it. Involve your lawyers early: survey their pain points and set realistic goals. Run demos on real examples so they see AI in action and build trust. Emphasize that AI is a tool to augment their work, not replace them. It will catch trivial items so they can focus on high-value tasks.

Provide training and champions to help. Track metrics (hours saved, issues found) and celebrate quick wins. Surveys and case studies show up to 95% of AI projects fail due to poor adoption, not technology limitations. The key is people and process: treat AI implementation like any other change management project.

Accuracy Benchmarking for Your Use Case

Not all AI is equal. Don't just rely on vendor claims. Do a proof-of-concept on your own documents: take 50 to 100 typical contracts and have lawyers annotate the outcomes (ground truth). Then run the AI on them. Measure its accuracy and error types directly.

This will reveal both performance and hallucination rates specific to your context. You might tweak the AI (custom prompts or additional training data) or switch providers based on these results. A 2 to 4 week pilot with real data is invaluable: it gives you confidence (or raises red flags) before full rollout and helps calculate your actual ROI.
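Scoring a pilot like this does not need special tooling. The sketch below compares AI-identified clauses against lawyer annotations and reports precision and recall. The data layout (a set of clause labels per contract ID) and every name in it are assumptions for illustration; a real pilot would load these from your own annotation files.

```python
def score(ground_truth: dict[str, set[str]],
          predicted: dict[str, set[str]]) -> dict[str, float]:
    """Compare AI output to lawyer annotations, clause by clause."""
    tp = fp = fn = 0
    for doc_id, truth in ground_truth.items():
        found = predicted.get(doc_id, set())
        tp += len(truth & found)   # clauses the AI found correctly
        fp += len(found - truth)   # clauses the AI reported that lawyers did not
        fn += len(truth - found)   # clauses the AI missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall,
            "false_positives": fp, "missed": fn}


# Tiny hypothetical test set: two contracts annotated by lawyers.
lawyer_labels = {
    "contract_001": {"indemnity", "liability_cap"},
    "contract_002": {"confidentiality"},
}
ai_labels = {
    "contract_001": {"indemnity"},                       # missed the liability cap
    "contract_002": {"confidentiality", "arbitration"},  # reported an extra clause
}

result = score(lawyer_labels, ai_labels)
print(f"Precision: {result['precision']:.0%}, recall: {result['recall']:.0%}")
print(f"False positives: {result['false_positives']}, missed: {result['missed']}")
```

Tracking false positives separately from misses matters here: reported clauses that lawyers cannot find in the document are where hallucinations show up, while misses reflect ordinary coverage gaps.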

Integration with Existing Workflows

The AI tool should fit into your world, not force you to rebuild it. Check if it integrates with your contract management or document systems. Does it offer a Word plugin, a web portal, or an API to your CLM? How will lawyers access AI suggestions in their daily routine? What about paper or scanned contracts?

Plan a gradual rollout: maybe start with AI in shadow mode or a small team. Ensure there's a clear process for handling contracts the AI can't process (hand those back to humans). The goal is seamless adoption, for example having AI flags show up in the same system where lawyers already work.

Professional Responsibility and Ethics

Remember: you are responsible for any legal work product. ABA rules effectively treat AI as a tool under your supervision. Model Rule 1.1 (competence) implies attorneys must understand how to use AI properly. Rule 5.3 (non-lawyer assistance) means you oversee the technology's output. Rule 1.6 (confidentiality) means safeguarding client data in these systems.

Ensure clear policies: who can use AI, how to document its use, how to verify results. Check your malpractice insurance: does it cover AI-related errors? In practice, view AI as a junior associate that needs supervising: always do a final human check on important documents and keep records of AI assistance.

The Honest, Nuanced Answer

AI wins at highly defined tasks. In areas like clause extraction and compliance checks, AI often surpasses lawyers. For instance, we've seen AI hit approximately 94% accuracy on NDA clause review versus approximately 85% for humans and 96 to 98% on compliance audits (versus approximately 90% for humans). In these domains, AI delivers consistent accuracy every time while humans tire out. At high volume, that means AI just plain catches more stuff and much faster.

Humans win at judgment. When interpretation, strategy, or context is needed, lawyers remain far more reliable. No AI today can match that for things like evaluating the reasonableness of a clause or making a high-stakes negotiation call.

At scale, hybrid dominates. The sweet spot is AI plus human oversight. AI handles thousands of pages in minutes; humans double-check and make final calls.

The Bottom Line

For large-scale document analysis, the accuracy question favors a hybrid AI-plus-human approach. You can save 80 to 90% of time and money while actually reducing errors, provided you implement it responsibly. The technology is ready. The question is whether your team is.

Frequently Asked Questions

Q: Can AI really review contracts as well as lawyers?

A: It depends on the task. AI is better at repetitive, structured tasks (for example clause finding, compliance checks) and comparable on straightforward reviews. Humans are better at nuanced judgment calls. In practice, AI will outperform on things like extracting clauses or validating formats, but a lawyer still wins on interpreting complex provisions and strategy.

Q: What about hallucinations? Can I trust AI?

A: Hallucinations are real. Mitigate them by using enterprise-grade AI with retrieval from your actual documents, requiring source citations, and having lawyers spot-check results. With those safeguards (AI that cites sources plus human review), the risk falls to low single-digit error rates.

Q: Will AI replace legal jobs?

A: Unlikely. AI will transform jobs, not eliminate them. Repetitive review tasks shift to AI and lawyers become quality controllers and strategists. Attorneys who adapt will handle higher-value work while others may be left behind. The lawyers of the future will focus on what AI can't do: empathy, strategy, and nuanced judgment.

Q: How much does legal AI cost?

A: Enterprise legal AI typically costs 10 thousand to 50 thousand dollars per year in subscription (depending on scale and features) plus a one-time setup of 5 thousand to 20 thousand dollars. Usage fees add roughly 0.50 to 2.00 dollars per document. Compare that to 125 thousand to 500 thousand dollars in lawyer fees for 1,000 contracts. In one example, AI plus QC cost 22 thousand dollars versus 125 thousand dollars manual: an ROI payback of just a couple of months.

Q: Is my client data safe with AI?

A: Only if you use a secure, enterprise-grade solution. Don't use public ChatGPT for confidential work. Demand end-to-end encryption, on-premises or private cloud options, no training on your data, and strict access controls. Ensure the vendor won't see or reuse your contracts. If those conditions are met, data can be kept safe under attorney-client privilege.

Q: Do I need to change my team to use AI?

A: Yes. Cultural change is critical. Most AI rollouts fail due to resistance. Involve your team early, get their buy-in, provide training, and show them how AI reduces drudgery. Assure them AI is there to help, not replace them. Reward and recognition of improved efficiency also go a long way.

Q: What if AI makes a mistake in a critical contract?

A: That's why humans are in the loop. Always have a lawyer review any AI output before sending it to a client or counterparty. Spot-checking catches the vast majority of AI errors. Ultimately, you remain responsible for the content, so use AI as an assistant with oversight, not as an unsupervised paralegal.

Q: What's the best way to start with legal AI?

A: Start small and measurable. Pick a high-volume, well-defined task. Assemble a test set (50 to 100 documents). Pilot an enterprise legal AI and measure its accuracy and time savings. Compute the ROI. Use the results to refine your approach, then gradually expand. With a 2 to 4 week pilot and iterative improvement, you can chart a clear path to an efficient AI-augmented review process.